Using Tracing to Resolve Performance Issues in Apache Ignite

Performance troubleshooting becomes more challenging when an operation spans multiple processes, and every operation executed against an Apache Ignite cluster does: it starts in the application and is processed by one or more Ignite server nodes. Tracing, a well-known technique for performance troubleshooting, records a request’s journey from start to finish and provides the timing details that you need to quickly locate bottlenecks and hot spots in your cluster.

In this part of the tutorial, you learn how to use the tracing capabilities of GridGain Nebula to observe the execution steps of the distributed Ignite transactions that the tutorial’s application generates.

Enable Tracing for Transactions

In Ignite, tracing is disabled by default so that the cluster does not spend resources collecting traces that are of no interest to you. You are expected to turn tracing on manually for a specific type of operation whenever you observe performance degradation.

Now, go ahead and enable the tracing of distributed transactions in Ignite:

-

Switch to the Tracing view in Nebula.

-

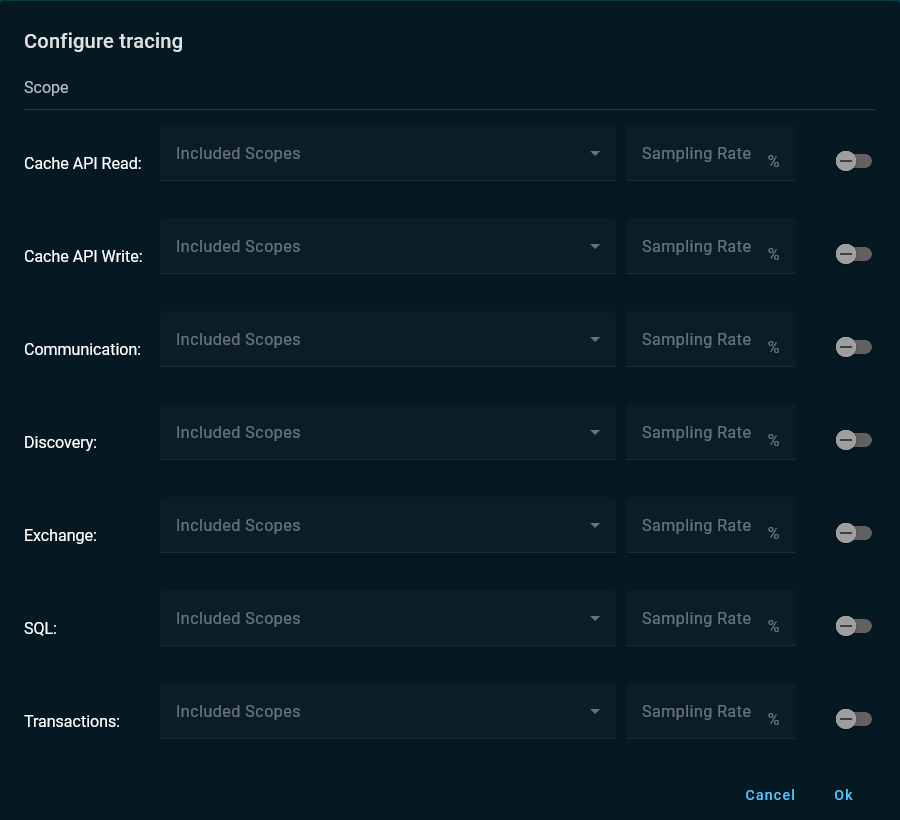

Click Configure Tracing.

-

Enable Transactions tracing, select

Cache API Writescope, and change the Sampling Rate field to30%. -

Click Ok to accept the changes.
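To build intuition for the 30% setting, here is a minimal sketch of probabilistic sampling. It assumes that the Sampling Rate acts as an independent per-transaction probability; the `TraceSampler` class and its methods are hypothetical and are not part of Ignite or GridGain Nebula.

```java
import java.util.Random;

/** Toy model of probabilistic trace sampling (hypothetical, not an Ignite or Nebula class). */
final class TraceSampler {
    private final double samplingRate; // fraction in [0.0, 1.0]; 0.3 corresponds to 30%
    private final Random random;

    TraceSampler(double samplingRate, Random random) {
        if (samplingRate < 0.0 || samplingRate > 1.0)
            throw new IllegalArgumentException("samplingRate must be within [0, 1]");
        this.samplingRate = samplingRate;
        this.random = random;
    }

    /** Decides, once per transaction, whether its trace is recorded. */
    boolean shouldTrace() {
        // nextDouble() returns a value in [0.0, 1.0), so a rate of 1.0 traces everything
        // and a rate of 0.0 traces nothing.
        return random.nextDouble() < samplingRate;
    }

    /** Counts how many of the given number of transactions would be traced. */
    static int countSampled(double rate, int transactions, long seed) {
        TraceSampler sampler = new TraceSampler(rate, new Random(seed));
        int traced = 0;
        for (int i = 0; i < transactions; i++) {
            if (sampler.shouldTrace())
                traced++;
        }
        return traced;
    }

    public static void main(String[] args) {
        int total = 100_000;
        int traced = countSampled(0.3, total, 42L);
        System.out.printf("traced %d of %d transactions%n", traced, total);
    }
}
```

Under this assumption, roughly three out of every ten transactions produce a trace, which keeps the tracing overhead bounded while still giving you a representative sample.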

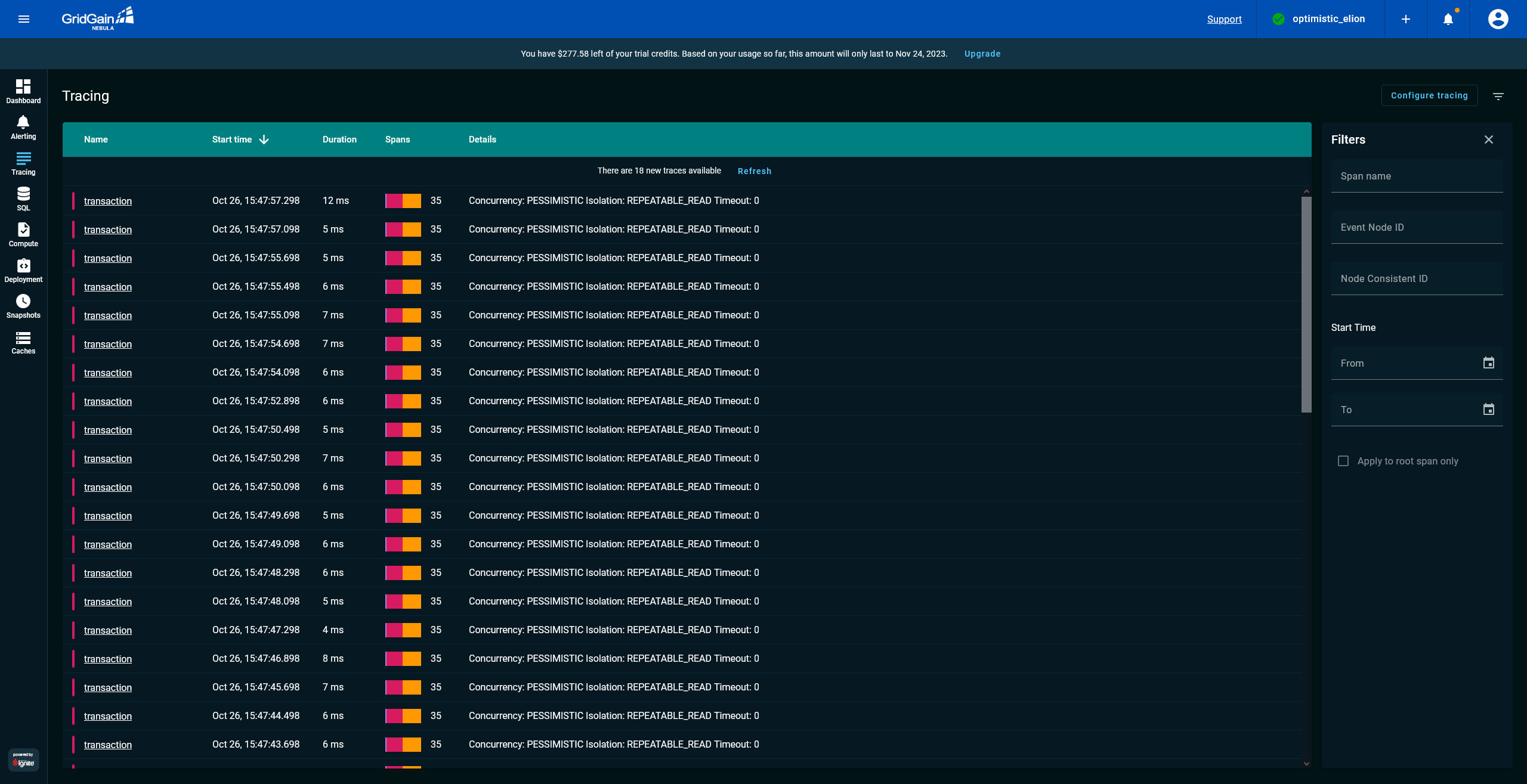

When tracing is enabled, GridGain Nebula intercepts transactions' traces. Open the Tracing screen of Nebula to see a list of already recorded traces:

Analyze Tracing Sample

Ignite uses the 2-phase-commit protocol (2PC) for its transactional engine. With tracing in place, you can observe how a transaction spans multiple nodes, how the commit splits into several steps and, most importantly, how much time is required to complete each step.
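As a reminder of what the two phases are, the following is a simplified sketch of a generic 2PC coordinator. It is not Ignite’s implementation: the `Participant` interface and the `RecordingNode` class are hypothetical, and the sketch contacts every participant directly, whereas in Ignite the primary node forwards the prepare request to its backups.

```java
import java.util.ArrayList;
import java.util.List;

/** Simplified two-phase commit (2PC). All names are hypothetical, not Ignite internals. */
final class TwoPhaseCommitSketch {

    /** A node that holds a copy of the record touched by the transaction. */
    interface Participant {
        boolean prepare();  // phase 1: vote on whether the change can be applied
        void commit();      // phase 2, when every participant voted yes
        void rollback();    // phase 2, when any participant voted no
    }

    /** In-memory participant that appends every call it receives to a shared log. */
    static final class RecordingNode implements Participant {
        private final String id;
        private final boolean vote;
        private final List<String> log;

        RecordingNode(String id, boolean vote, List<String> log) {
            this.id = id;
            this.vote = vote;
            this.log = log;
        }

        @Override public boolean prepare() { log.add(id + ":prepare"); return vote; }
        @Override public void commit() { log.add(id + ":commit"); }
        @Override public void rollback() { log.add(id + ":rollback"); }
    }

    /** Runs both phases and returns true if the transaction was committed. */
    static boolean run(List<? extends Participant> participants) {
        boolean allPrepared = true;
        for (Participant p : participants) {
            if (!p.prepare()) {
                allPrepared = false;
                break; // one "no" vote is enough to abort
            }
        }
        for (Participant p : participants) {
            if (allPrepared)
                p.commit();
            else
                p.rollback();
        }
        return allPrepared;
    }

    public static void main(String[] args) {
        List<String> log = new ArrayList<>();
        boolean committed = run(List.of(
            new RecordingNode("primary", true, log),
            new RecordingNode("backup", true, log)));
        System.out.println("committed=" + committed + " calls=" + log);
    }
}
```

Each call in the log corresponds to a step that a trace can time separately, which is why tracing is a good fit for diagnosing slow commits.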

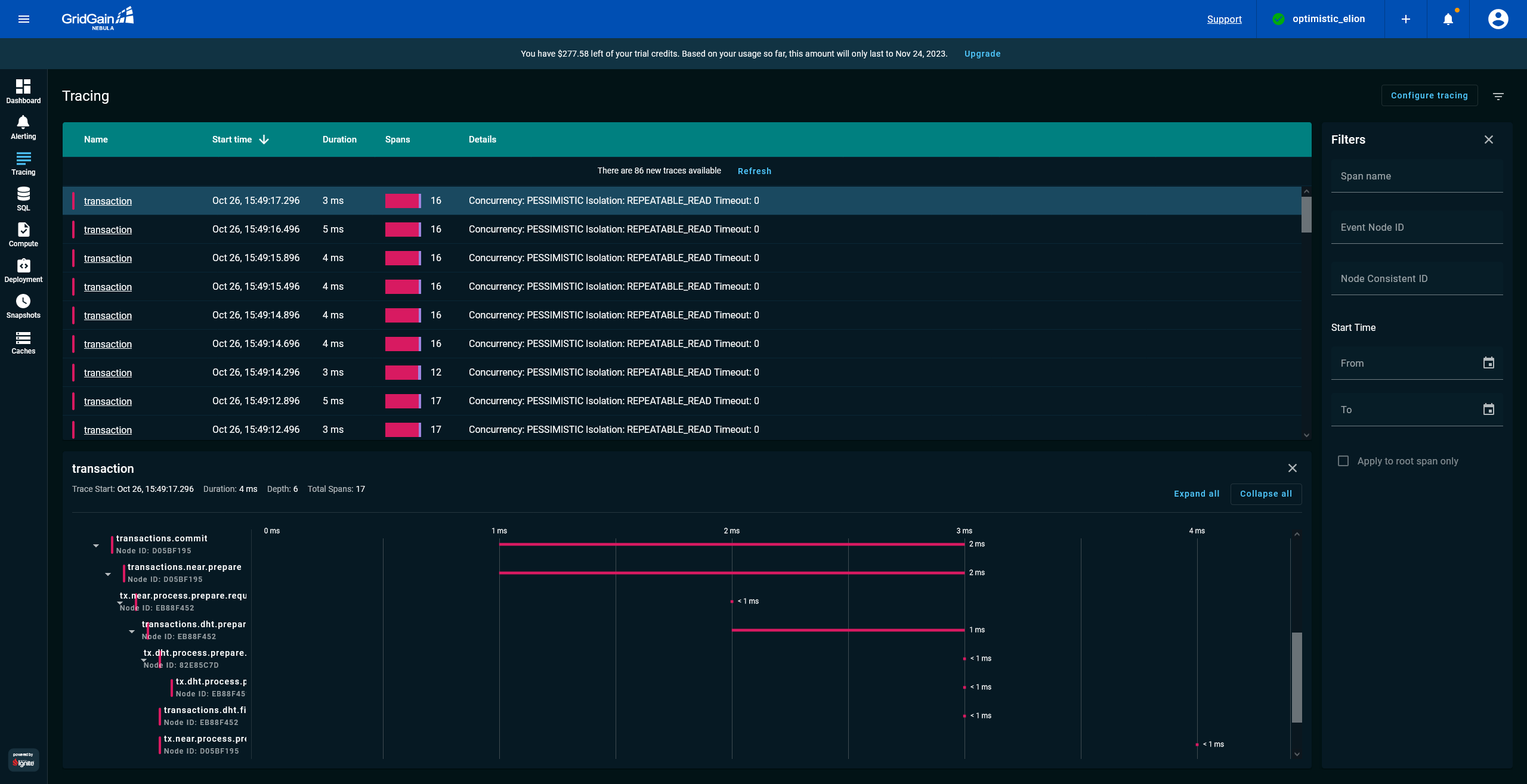

In Nebula, select a trace from the list of traces and unfold the transactions.commit sub-tree as shown in the following screenshot:

The screenshot highlights three steps (also known, in tracing terminology, as “spans”) of the commit phase:

- transactions.commit span: The commit is initiated by the application that is connected to the cluster via a client node. The ID of the client node is B882C0A0.

- tx.near.process.prepare.request: The 2PC began executing the prepare phase of the protocol, and the request arrived at the primary node, which keeps the record that is being updated by the transaction. The ID of the primary node is D6CEAFA2.

- tx.dht.process.prepare.request: Because the cluster keeps a backup copy of every record, the primary node (D6CEAFA2) sent the prepare request to the backup node that keeps the copy. The ID of the backup node is 7410F9DA.
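If you want to reason about such a trace outside of the UI, the three spans can be modeled as a small tree. The sketch below is illustrative only: the `SpanNode` class is hypothetical, the nesting (commit, then primary, then backup) is an assumption based on the description above, and the durations are invented for the example rather than measured. It computes each span’s self time, that is, its duration minus the time spent in nested spans, and picks the span with the largest self time as the likely hot spot.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical in-memory model of a trace span; not a Nebula or Ignite class. */
final class SpanNode {
    final String name;
    final String nodeId;
    final long durationMicros; // total time, including nested spans
    final List<SpanNode> children = new ArrayList<>();

    SpanNode(String name, String nodeId, long durationMicros) {
        this.name = name;
        this.nodeId = nodeId;
        this.durationMicros = durationMicros;
    }

    /** Adds a nested span and returns this span to allow chaining. */
    SpanNode child(SpanNode nested) {
        children.add(nested);
        return this;
    }

    /** Time spent in this span itself, assuming that nested spans do not overlap. */
    long selfMicros() {
        long nested = 0;
        for (SpanNode c : children)
            nested += c.durationMicros;
        return durationMicros - nested;
    }

    /** Returns the span with the largest self time in this sub-tree. */
    SpanNode slowest() {
        SpanNode best = this;
        for (SpanNode c : children) {
            SpanNode candidate = c.slowest();
            if (candidate.selfMicros() > best.selfMicros())
                best = candidate;
        }
        return best;
    }

    public static void main(String[] args) {
        // Durations are made up for illustration; node IDs come from the trace described above.
        SpanNode backup = new SpanNode("tx.dht.process.prepare.request", "7410F9DA", 200);
        SpanNode primary = new SpanNode("tx.near.process.prepare.request", "D6CEAFA2", 600).child(backup);
        SpanNode commit = new SpanNode("transactions.commit", "B882C0A0", 900).child(primary);

        SpanNode hot = commit.slowest();
        System.out.println(hot.name + " on node " + hot.nodeId + ": " + hot.selfMicros() + " us self time");
    }
}
```

With these invented numbers, the primary node’s prepare step has the largest self time, so that is where you would start investigating.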

Presently, there is no performance degradation, and all transactions are committed promptly. However, if a hot spot or bottleneck appears in production, you will know how to find it with tracing.

Disable Tracing for Transactions

Before you proceed with the tutorial’s next part, disable tracing for transactions:

- Go to the Tracing view.

- Click Configure Tracing.

- Disable Transactions tracing.

- Click Ok.

What’s Next

Complete the next part of the tutorial, where you learn how to back up and restore the cluster.
