Characteristics, Types & Components of an Event Stream Processing System

(Note that this is Part 1 of a three-part series on Event Stream Processing. Here are the links for Part 2 and Part 3.)

Like many technology-related concepts, “streams” or “event streaming” is understood in many different contexts and in many different ways, so expectations for Event Stream Processing (ESP) vary widely. In this article we discuss the basic concepts that make up streaming, enumerate a set of functional capabilities within this domain, and organize a set of technical components to meet the functional capabilities of an Event Stream Processing solution.

Background

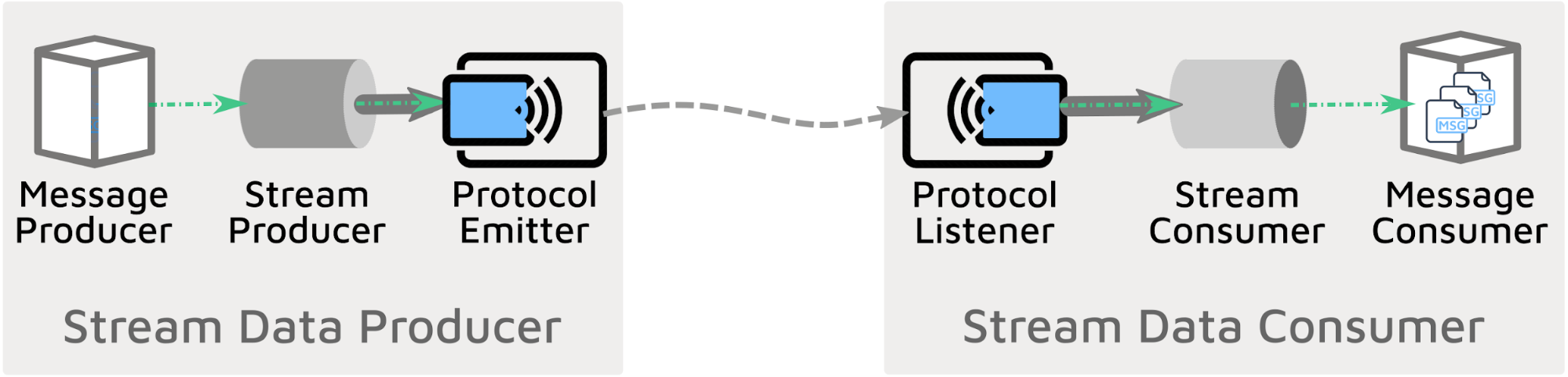

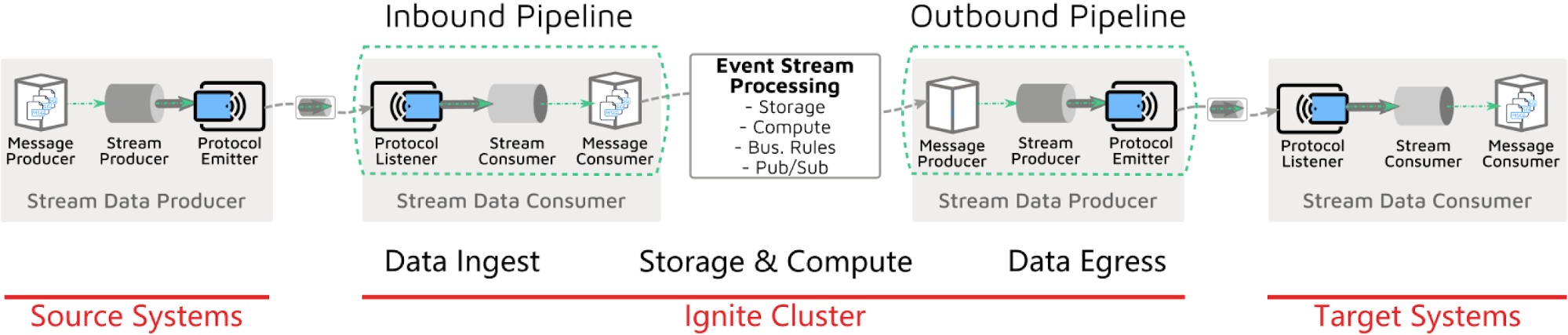

At the highest level, an information stream is the data that flows continuously between a producer and one or many consumers. In the diagram below, we see the Stream Data Producer and Stream Data Consumer.

Here we start to separate multiple functions within the Streaming domain, in particular:

- Message Producer and Consumer - the business aspects of a stream of messages

- Stream Producer and Consumer - the technical elements of coordinating messages together for proper transmission between producer and consumer.

- Protocol Emitter and Protocol Listener - the transport elements that transmit the stream of business messages (i.e., protocol emitters and listeners should understand or support the semantics of a message stream). These elements must also account for technical stream processing, including buffering, chunking, setup & tear down, optimization, etc.

We will call the combination of these three components a pipeline and distinguish between inbound and outbound variants.

Streaming Context

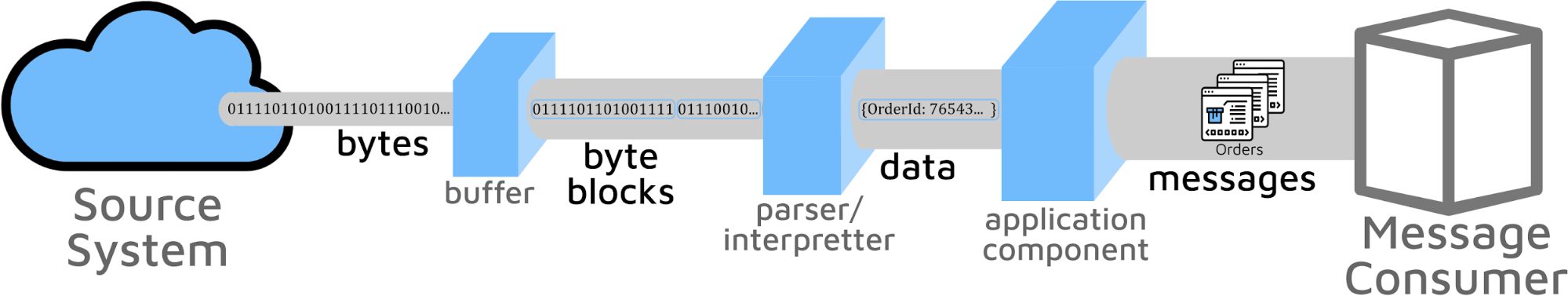

In an end-to-end stream-based solution, there exist multiple contexts of “streaming”. If we examine one pipeline, like data ingestion of the Stream Data Consumer, there is a progression of functionality ranging from technical byte I/O to higher order components that deal with business messages handling. This is shown below:

While all stream solutions must physically send bytes on a wire or some medium, the chosen components may not expose these low-level semantics to the solution developers. Different streaming components expose their facilities via APIs that are more or less message oriented or byte & protocol based. These two extremes reflect two different streaming contexts:

Protocol Byte Stream - a protocol-centric definition of a stream as a sequence of bytes. At this level, messages can be thought of as a collection of elements that can be read individually or iterated through, that can address the stream source, and that can be organized or operated together in a pipeline between components. The Java API and its streams library, which is implemented for many specific protocols, is one example. When streaming is implemented for any particular protocol, that protocol can be thought of as stream-enabled.

As an example, one may be able to open a stream on a file, on a TCP socket, or on a message queue to receive data because these all have the Streaming API implemented. Interacting with a stream at the byte or byte-buffer level can bring benefits such as improved speed and reduced memory consumption, as we find in streaming parsers.
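As a minimal sketch of the byte-level context, the Java code below consumes any `java.io.InputStream` through a small fixed-size buffer, so memory use does not grow with the size of the source. The class name `ChunkedByteReader` and the newline-counting task are illustrative assumptions, not part of any product API; a stream opened on a file or a socket could be passed in the same way as the in-memory stream used here.

```java
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;

/** Hypothetical helper: reads any InputStream in fixed-size chunks. */
final class ChunkedByteReader {

    /** Counts newline bytes while holding at most bufferSize bytes in memory. */
    static long countLines(InputStream in, int bufferSize) {
        byte[] buffer = new byte[bufferSize];
        long lines = 0;
        try {
            int read;
            // read() returns -1 at end of stream and may fill only part of the buffer.
            while ((read = in.read(buffer)) != -1) {
                for (int i = 0; i < read; i++) {
                    if (buffer[i] == '\n') {
                        lines++;
                    }
                }
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return lines;
    }

    public static void main(String[] args) {
        byte[] data = "order-1\norder-2\norder-3\n".getBytes(StandardCharsets.US_ASCII);
        // An 8-byte buffer forces several reads over the 24-byte source.
        System.out.println(countLines(new ByteArrayInputStream(data), 8));
    }
}
```

Because the method depends only on the `InputStream` abstraction, the same loop applies to any source that is stream-enabled in the sense described above.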

Business Message Stream - a stream of business messages that is characterized by an ongoing flow of information and has features like ordering or sequence and message groups (e.g. a group of messages to be taken together as in a transaction). The stream of information is often named as a topic, and consumers subscribe to it via this topic or read it from a queue.

An example at this level might be a Kafka-based integration where JSON-encoded business objects are communicated as messages on a topic. The benefit of this approach is that the component (e.g. GridGain certified Kafka Connector) handles the protocol (e.g. TCP socket), session handling, buffering, enveloping, transaction handling, and message parsing, and delivers a fully constructed message directly to the consuming client, which reduces development time.

Some components may need low-level streaming capabilities with significant communication protocol handling, message enveloping, payload parsing, and other processing to deliver a business message, whereas other components may hide most or all of the underlying technical streaming components and simply deliver a stream of business messages. A streaming solution may have elements of both types as dictated by the particular business systems and messaging infrastructure in use.
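To make the gap between the two contexts concrete, here is a hedged sketch of the kind of enveloping work a low-level component has to do before a business message exists. It assumes a simple, hypothetical wire format (a 4-byte length header followed by a UTF-8 payload); real protocols differ, and the class name `LengthPrefixedFraming` is illustrative only.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.EOFException;
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/** Hypothetical framing: each message is a 4-byte length followed by UTF-8 bytes. */
final class LengthPrefixedFraming {

    /** Outbound side: envelope each message and append it to one byte sequence. */
    static byte[] encode(List<String> messages) {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(bytes)) {
            for (String message : messages) {
                byte[] payload = message.getBytes(StandardCharsets.UTF_8);
                out.writeInt(payload.length);
                out.write(payload);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bytes.toByteArray();
    }

    /** Inbound side: read frames until no further length header can be read. */
    static List<String> decode(InputStream source) {
        List<String> messages = new ArrayList<>();
        DataInputStream in = new DataInputStream(source);
        try {
            while (true) {
                int length;
                try {
                    length = in.readInt();
                } catch (EOFException endOfStream) {
                    break;
                }
                byte[] payload = new byte[length];
                in.readFully(payload); // a truncated payload surfaces as an exception
                messages.add(new String(payload, StandardCharsets.UTF_8));
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return messages;
    }

    public static void main(String[] args) {
        byte[] wire = encode(Arrays.asList("{\"orderId\":1}", "{\"orderId\":2}"));
        System.out.println(decode(new ByteArrayInputStream(wire)));
    }
}
```

A message-oriented connector hides this step entirely, so the consuming code only ever sees the decoded list.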

Stream Event Messages

As the name implies, information in the form of data bytes or fully formed business messages arrives as a “stream”, and the implication is that it arrives as “events”. The consumer may want or subscribe to this stream, but the initiator of any particular instance of information is the producer, so the consumer should think of the stream as a stream of events. It is for this reason that event handling infrastructure is inherent in any ESP solution.

Event Message Types

For stream event messages to be used in a solution, facilities and patterns to handle the different types of events must be available. Meaningful interactions can only occur if the producer and consumer agree on the types and contents of these event messages. In classifying event messages, we see the following four types:

- Event Notifications - the smallest possible indication of an event occurring, with an identifier to the full context

- Data Change Events - the indication of and context of what has changed at this time

- Full Business State Transfer - like the above data change event, but including a more complete rendering of the state of the object(s) involved in the underlying business event

- Commands - a message arriving as an event but reflecting a desire for something to happen, unlike the preceding three types, which reflect something that has happened
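One way a producer and consumer might agree on these four types is a shared message model. The sketch below is an assumption for illustration (the names `EventMessageType`, `EventMessage`, and the `action` payload key are hypothetical); it only shows how the amount of context carried differs by type, and how commands differ from the other three.

```java
import java.util.Collections;
import java.util.LinkedHashMap;
import java.util.Map;

/** The four event message types described in the list above. */
enum EventMessageType { EVENT_NOTIFICATION, DATA_CHANGE, FULL_STATE_TRANSFER, COMMAND }

/** Hypothetical envelope shared by producer and consumer. */
final class EventMessage {
    final EventMessageType type;
    final String entityId;              // identifier pointing to the full context
    final Map<String, Object> payload;  // empty, changed fields, full state, or command details

    private EventMessage(EventMessageType type, String entityId, Map<String, Object> payload) {
        this.type = type;
        this.entityId = entityId;
        this.payload = Collections.unmodifiableMap(new LinkedHashMap<>(payload));
    }

    /** Smallest form: only the identifier, no data. */
    static EventMessage notification(String entityId) {
        return new EventMessage(EventMessageType.EVENT_NOTIFICATION, entityId,
                Collections.<String, Object>emptyMap());
    }

    /** Carries only the fields that changed. */
    static EventMessage dataChange(String entityId, Map<String, Object> changedFields) {
        return new EventMessage(EventMessageType.DATA_CHANGE, entityId, changedFields);
    }

    /** Carries a more complete rendering of the object's state. */
    static EventMessage stateTransfer(String entityId, Map<String, Object> fullState) {
        return new EventMessage(EventMessageType.FULL_STATE_TRANSFER, entityId, fullState);
    }

    /** Expresses a desire for something to happen. */
    static EventMessage command(String entityId, String action) {
        return new EventMessage(EventMessageType.COMMAND, entityId,
                Collections.<String, Object>singletonMap("action", action));
    }

    /** True for the three types that report something that has already happened. */
    boolean describesPastFact() {
        return type != EventMessageType.COMMAND;
    }

    public static void main(String[] args) {
        EventMessage cancel = EventMessage.command("order-42", "cancel");
        System.out.println(cancel.type + " pastFact=" + cancel.describesPastFact());
    }
}
```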

Event Interaction Types

Most recent event architectures have focused on decoupling producers from consumers through fully asynchronous interaction, in order to deliver scale and reduce the brittleness of the solution. However, this approach loses the broader situational awareness that synchronous or partially synchronous interactions can deliver. The increase in message throughput that decoupling delivers can also require more complex business orchestrations to handle otherwise simple features such as message acknowledgements, message acknowledgements with receipts, and message responses with reply context. The broad range of message interactions includes the following interaction types:

- Event-only or “Fire & Forget” (Asynchronous messaging): For the asynchronous Fire & Forget interaction type, the producer writes the event message onto the transport and only the success of writing to the transport is exposed to the producer. This interaction can be thought of as “send successful”, and delivers the most decoupled and shortest interaction times. This is typical of the interactions that come from using a messaging transport like JMS or Kafka.

- Event-Acknowledgement: For Event-Ack, the producer is returned an acknowledgement of message delivery and this acknowledgement may include the related message id on the receiver side. This interaction can be thought of as “delivery successful”. With the consumer’s acknowledgement message id, the producer has the ability to track the delivery and processing in the target system. The acknowledgement may also include a message delivery receipt (which may even be signed for delivery non-repudiation). The Event-Ack interaction will take more time as it requires the message to be retrieved off the transport by the consumer, but it does not require the consumer to actually process the message. This is the typical approach in B2B message exchanges like AS2 where delivery receipts are critical.

- Event-Reply (Synchronous messaging): Like the Event-Ack interaction type, the consumer has received the message, but instead of simply returning an acknowledgement message, in this interaction the consumer has processed the message and can reply with context from the receipt and processing of the message. This interaction can be thought of as “message processed successfully”. This synchronous interaction holds resources for the longest time and thus is the least scalable, but it is the simplest to implement for a single point-to-point integration. This interaction type is usually implemented over synchronous transports like TCP or HTTP.
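The difference between the three interaction types is mainly about when the producer learns something. The single-threaded sketch below models this with an in-memory queue standing in for the transport; `InMemoryChannel`, its method names, and the `receipt-N` id format are all hypothetical and not taken from JMS, Kafka, or AS2.

```java
import java.util.ArrayDeque;
import java.util.Queue;
import java.util.concurrent.CompletableFuture;
import java.util.function.Function;

/** Hypothetical in-memory transport illustrating the three interaction types. */
final class InMemoryChannel {

    private static final class Envelope {
        final String body;
        final CompletableFuture<String> ack = new CompletableFuture<>();
        final CompletableFuture<String> reply = new CompletableFuture<>();
        Envelope(String body) { this.body = body; }
    }

    private final Queue<Envelope> transport = new ArrayDeque<>();
    private int nextReceiptId = 1;

    /** Fire & Forget: the producer only learns that the write succeeded. */
    boolean send(String body) {
        return transport.offer(new Envelope(body));
    }

    /** Event-Ack: completes with a consumer-side receipt id once the message is taken off the transport. */
    CompletableFuture<String> sendWithAck(String body) {
        Envelope envelope = new Envelope(body);
        transport.offer(envelope);
        return envelope.ack;
    }

    /** Event-Reply: completes only after the consumer has processed the message. */
    CompletableFuture<String> sendForReply(String body) {
        Envelope envelope = new Envelope(body);
        transport.offer(envelope);
        return envelope.reply;
    }

    /** Consumer side: take one message, acknowledge delivery, then process and reply. */
    boolean consumeOne(Function<String, String> handler) {
        Envelope envelope = transport.poll();
        if (envelope == null) {
            return false;
        }
        envelope.ack.complete("receipt-" + nextReceiptId++); // delivered, not yet processed
        envelope.reply.complete(handler.apply(envelope.body)); // processed
        return true;
    }

    public static void main(String[] args) {
        InMemoryChannel channel = new InMemoryChannel();
        CompletableFuture<String> reply = channel.sendForReply("price-check");
        System.out.println("before consume, reply done: " + reply.isDone());
        channel.consumeOne(String::toUpperCase);
        System.out.println("after consume, reply: " + reply.getNow(null));
    }
}
```

In a real system the acknowledgement and the reply would travel back over the transport; here they are completed in-process only to show the ordering of “send successful”, “delivery successful”, and “message processed successfully”.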

With respect to streaming, each of these event interactions requires a different handling strategy, and these strategies are strongly correlated to the messaging transports. A stream of event messages received from Kafka will therefore differ from a stream of messages received from a TCP socket and will require different stream processing capabilities.

Synthetic Events

There often exists a mismatch between system protocols (for example, those protocols that only support request/response) and desired information flow patterns (event, or event plus reply). For example, a file placed on a remote FTP server by a message producer may be thought of as the business event that should drive event-based processing of the contained message(s) in the file. However, the FTP protocol does not inherently have a call-back or subscription facility by which consumers could be notified of the arrival of new files/messages. An event can nevertheless be synthesized for this use case by overlaying a poll-based query onto the request-based protocol. In other words, by checking at some frequency for the arrival of new files (and keeping track of past context for future queries), one can create an “event” from the specific query that finds the new file. By arbitrarily increasing the polling frequency (that is, shortening the polling interval), one can bring these artificial, synthetic events so close to a real-time stream that they become nearly equivalent to “real” events. There is an obvious cost to this arbitrary speeding up of the polling, and that cost may limit the ability to become truly real-time, especially by the definition of some businesses.
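The polling technique can be sketched as follows. This is an illustration under stated assumptions: the remote listing is abstracted as a `Supplier` (in practice it might wrap an FTP directory listing), and the class and parameter names (`PollingEventSource`, `listRemoteFiles`, `onNewFile`) are hypothetical. The set of already-seen names is the “past context” that turns a repeated query into new-file events.

```java
import java.util.ArrayList;
import java.util.Collection;
import java.util.HashSet;
import java.util.List;
import java.util.Set;
import java.util.function.Consumer;
import java.util.function.Supplier;

/** Hypothetical poller that synthesizes "new file" events from a request-only listing call. */
final class PollingEventSource {
    private final Supplier<Collection<String>> listRemoteFiles; // e.g. wraps a remote directory listing
    private final Consumer<String> onNewFile;                   // event handler
    private final Set<String> seen = new HashSet<>();           // past context kept between polls

    PollingEventSource(Supplier<Collection<String>> listRemoteFiles, Consumer<String> onNewFile) {
        this.listRemoteFiles = listRemoteFiles;
        this.onNewFile = onNewFile;
    }

    /** One polling cycle: emit an event for every name not seen before; returns the number emitted. */
    int pollOnce() {
        int emitted = 0;
        for (String name : listRemoteFiles.get()) {
            if (seen.add(name)) {
                onNewFile.accept(name);
                emitted++;
            }
        }
        return emitted;
    }

    public static void main(String[] args) {
        List<String> remote = new ArrayList<>();
        PollingEventSource source =
                new PollingEventSource(() -> new ArrayList<>(remote), name -> System.out.println("new file: " + name));
        remote.add("orders-001.csv");
        source.pollOnce(); // emits one event
        source.pollOnce(); // emits nothing, the file is already known
    }
}
```

A scheduler such as `java.util.concurrent.ScheduledExecutorService` could call `pollOnce()` at a fixed delay; shortening that delay narrows the gap to real time at the cost of more requests against the source system.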

Event Stream Processing Solution Capabilities

A consequence of handling fine-grained or coarse-grained streaming context and supporting the event-based paradigm is that an ESP solution must have the following capabilities:

Protocol Adapters/Connectors

Adapters or connectors that can receive events, subscribe to and receive events, or synthetically poll for events from source systems. This should be a long list and include components across the modern technology landscape, from pure technology/communication protocol adapters like HTTP, TCP, JDBC/ODBC, SMTP, AS2, etc., to messaging platforms like Kafka, JMS, MQTT, MQ, etc., and to specific application systems like SAP ERP, Siebel, JD Edwards, and Microsoft Dynamics. Features like chunking, batching, and other performance features can be important for advanced solution delivery.

Apache Ignite comes with a wide range of streaming components to deliver this capability, as seen on the Data Loading & Streaming page.

Event Filtering & Semantics

The ability to define or filter out from the entire stream those characteristics that make up an event of significance. This can include the ability to define context relative to individual or related events. This could be as simple as subscribing to a particular subject, like “foreign exchange trade type”, or applying a filtering predicate like “Orders greater than or equal to $10,000”. It could also be more complicated and include event-based predicates like “WITHIN” as in “Orders WITHIN the last 4 hours having count(Country) Greater than 1”.
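The two kinds of predicate mentioned above can be sketched in plain Java. The `Order` fields, the use of whole dollars, and the reading of “count(Country) greater than 1” as “more than one distinct country” are assumptions made for illustration; a stream processing engine would typically express the windowed condition in its own query language.

```java
import java.time.Duration;
import java.time.Instant;
import java.util.Arrays;
import java.util.List;
import java.util.function.Predicate;
import java.util.stream.Collectors;

/** Hypothetical order event. */
final class Order {
    final String id;
    final String country;
    final long amountUsd;
    final Instant placedAt;

    Order(String id, String country, long amountUsd, Instant placedAt) {
        this.id = id;
        this.country = country;
        this.amountUsd = amountUsd;
        this.placedAt = placedAt;
    }
}

final class OrderFilters {
    /** Simple filtering predicate: orders greater than or equal to $10,000. */
    static final Predicate<Order> LARGE_ORDER = order -> order.amountUsd >= 10_000;

    static List<Order> largeOrders(List<Order> orders) {
        return orders.stream().filter(LARGE_ORDER).collect(Collectors.toList());
    }

    /** Windowed predicate: do orders placed within the window span more than one distinct country? */
    static boolean multiCountryWithin(List<Order> orders, Instant now, Duration window) {
        Instant cutoff = now.minus(window);
        long distinctCountries = orders.stream()
                .filter(order -> !order.placedAt.isBefore(cutoff))
                .map(order -> order.country)
                .distinct()
                .count();
        return distinctCountries > 1;
    }

    public static void main(String[] args) {
        Instant now = Instant.now();
        List<Order> orders = Arrays.asList(
                new Order("o1", "US", 12_000, now.minus(Duration.ofHours(1))),
                new Order("o2", "DE", 500, now.minus(Duration.ofHours(2))));
        System.out.println(largeOrders(orders).size());
        System.out.println(multiCountryWithin(orders, now, Duration.ofHours(4)));
    }
}
```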

Event Processing & Event Dispatching

When an event of interest is received from a source system, the solution must be able to handle it in whichever way best fits the desired solution goals. These goals may be as simple as persisting the event data, may include enriching the event data or running business rules that decide how to handle specific events, or may be as complex as running machine learning algorithms to classify, predict or forecast complex or composite events from basic business events.

Apache Ignite supports transactional data injection for persisting data via the JCache (JSR 107) standard or the extended Ignite Cache API, which supports Atomic or Transactional writing to a cache along with a wide range of advanced calls like getAndPutIfAbsent(), etc. Ignite’s Data Streamer API also provides at-least-once delivery semantics to ensure that a message injected into the cluster will not be lost. Finally, message dispatching can make use of APIs to call the Ignite Compute Grid or the Ignite Service Grid, enabling advanced integration with complex event processing solutions using business rules engines like Drools, or Ignite’s own Machine Learning (ML) and Deep Learning (DL) facilities.

Apache Ignite provides a Continuous Query capability for registering to, filtering for and processing information injected into the storage layer of the cluster. Ignite also provides a proactive Cache Interceptor API to enable change detection, comparison and rules interjection. In parts two and three of this series, we will go into further details on these two capabilities and create a sample event streaming solution using Ignite Continuous Query.

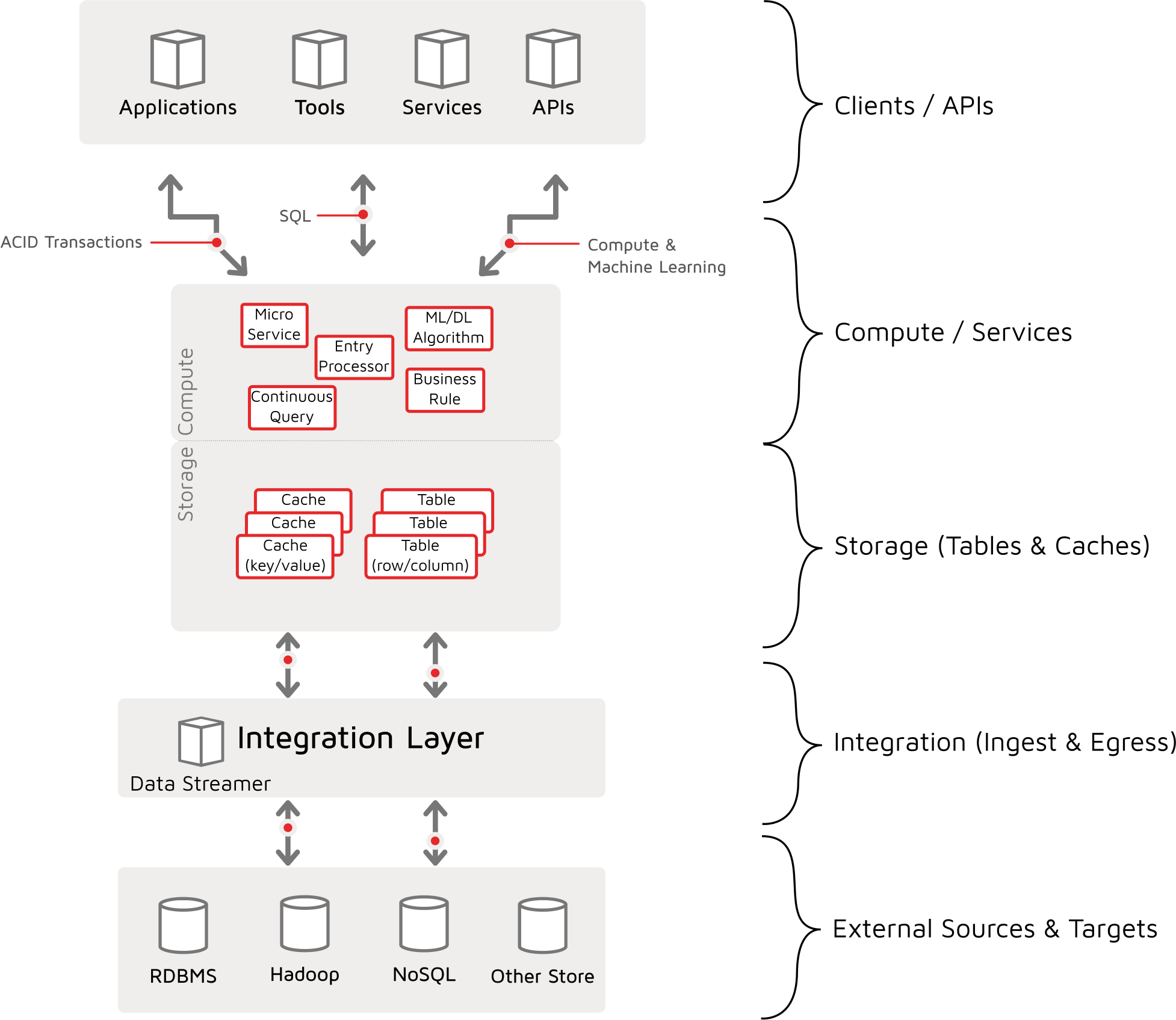

Ignite Digital Integration Hub and Streaming

The Digital Integration Hub is often thought of as supporting APIs and Microservices consumers. However, the bottom four layers provide storage and computing capabilities and are supported by integration facilities to interact with source and target systems.

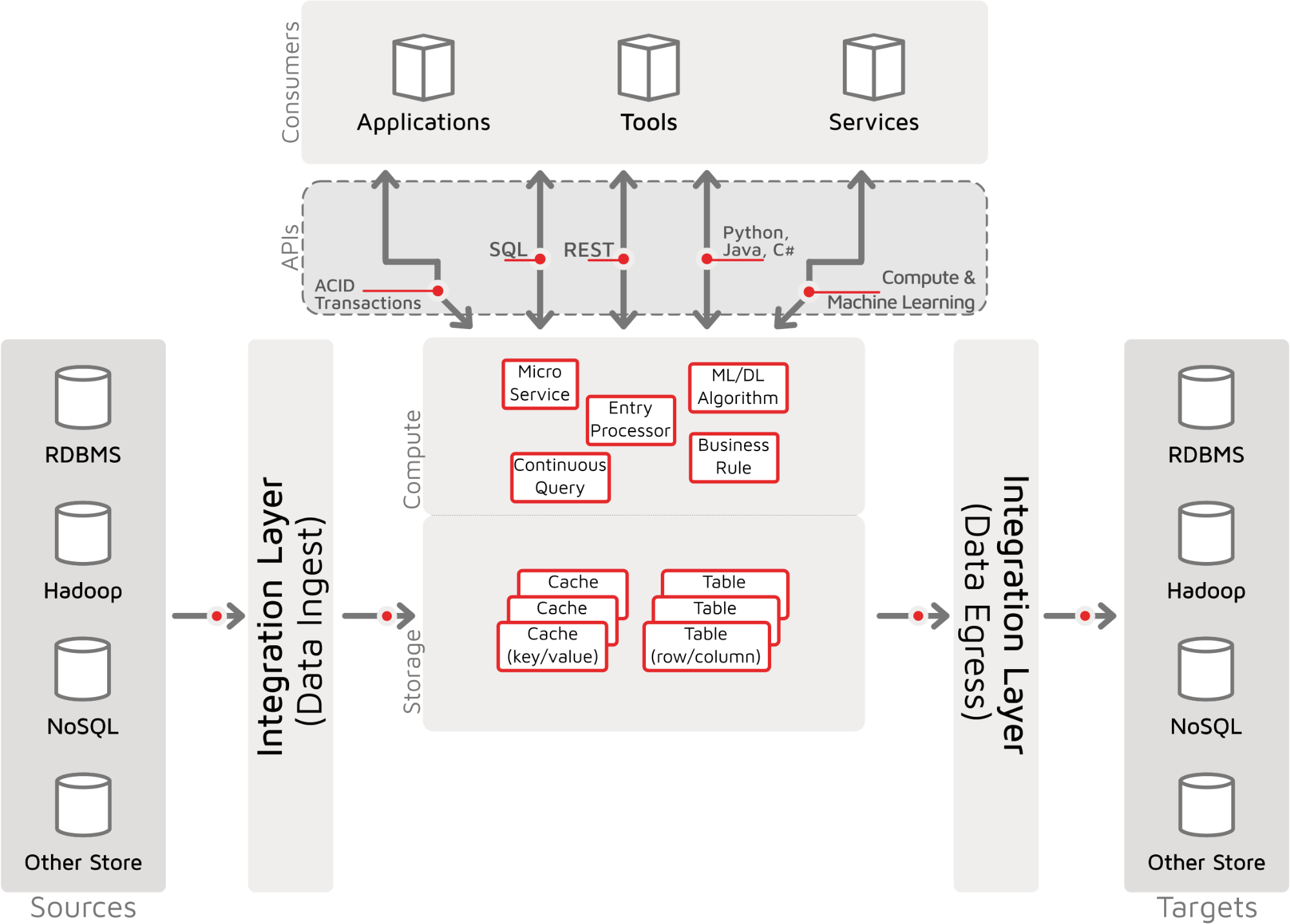

By taking the Integration layer and separating out data ingest from data egress, we get a better view of the system landscape that depicts the data flow from source systems, through the digital hub, to target systems. The Apache Ignite Digital Integration Hub viewed with its “Horizontal” architecture is shown below. In this broader definition, data ingest into Ignite, and data egress from Ignite can be seen as channels or pipelines that may need or leverage stream producer and stream consumer patterns. Additionally, the core storage and compute facilities must accommodate streaming features to fully capture the benefits of a real-time streaming solution.

Data Ingest can be seen as connecting a source system stream producer to Ignite as a stream consumer in an Inbound Pipeline. Similarly, an Outbound Pipeline starts with Ignite as a stream producer connected to a target system and its stream consumer. With these two pipelines, below is a more detailed picture of the end-to-end system landscape throughout the inbound pipeline, internal event handling and outbound pipeline:

Summary

In this article, we have looked at the core elements of a real-time, event stream handling scenario. We first reviewed what it means to be a consumer or producer of a stream. We saw how different agents and components view streams in different contexts, from high-level business message flow to lower-level technical protocol and message enveloping mechanisms. From an Ignite-centric view of Stream Processing, we enumerated the many capabilities that are needed in an inbound streaming pipeline, in event handling and dispatching, and in the outbound pipeline. In the next two articles, we will describe how Ignite components deliver these capabilities and how they are organized in a pattern to deliver an ESP solution, and then we will investigate a key element in event processing, the Continuous Query.