Real-time payments and digital wallets raise the bar for payment infrastructure.

Customers expect money to move immediately. Balances should refresh in real time. Fraud checks must occur before the authorization window closes. And the platform has to hold up during traffic spikes, deployments, and infrastructure failures just as well as it does on a normal day.

That is a tougher architectural shift than it sounds.

Many payment environments were built for overnight batch runs, predictable peaks, and clearer system boundaries. Today, those same environments are being asked to support RTP, wallet funding, account-to-account flows, and partner APIs that never really go quiet.

For payments technology leaders, the challenge is straightforward to describe but hard to solve: deliver an instant experience without introducing new risks to consistency, resilience, or the pace of modernization.

Where the pressure really shows up

In practice, the front end is rarely the hard part. The hard part is ensuring the systems behind it can authorize, persist, and recover transactions within a very tight time budget.

That pressure tends to show up in several places at once:

- Authorize and post transactions in milliseconds: If balance checks, routing decisions, or posting logic have to bounce across slow integrations, the real-time experience disappears quickly.

- Run fraud and risk checks inside the transaction window: A good model is not much help if it returns after the decision has already been made.

- Keep transaction state consistent across systems: Wallet balances, holds, refunds, reversals, and ledger entries all need to line up, even when volumes spike or infrastructure degrades.

- Stay available when conditions are less than ideal: Payroll runs, promotions, month-end peaks, and failover events are when the architecture is exposed.

Taken together, these demands are what make real-time payments so challenging in environments still anchored to batch-era cores and fragmented data.

Why batch-era architectures struggle with RTP and wallets

Most payment environments still have a recognizable shape: a system of record in the middle, a set of surrounding services, and an integration layer tying together transaction processing, fraud, customer data, and posting.

That pattern has worked well for many payment environments. It can become harder to sustain when every step has to happen continuously, with low latency, and without much room for delayed reconciliation.

Common pressure points include:

- Legacy core systems are optimized for batch processing, not for event-driven or high-velocity streaming transactions

- Real-time payment flows increasingly rely on streaming and event-driven patterns, but many legacy environments were not designed to process and reconcile events continuously

- Transaction, account, and balance data are spread across disconnected systems

- Integration layers introduce latency at the exact point where milliseconds matter

- Volume spikes create bottlenecks that degrade response times unless backpressure can be applied

- Failover models often preserve availability at the cost of slower recovery or inconsistent transaction state

That is why so many modernization programs bog down. The business asks for real-time services, while the architecture underneath still forces trade-offs among speed, correctness, and resilience.
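The backpressure point in the list above can be made concrete with a small sketch. It assumes a hypothetical `BoundedIntake` buffer (the class name, capacity, and payload shape are illustrative, not taken from any specific product) that rejects new work once it is full, so callers can back off and retry instead of letting an unbounded queue push response times up.

```python
import queue


class BoundedIntake:
    """Hypothetical intake buffer: rejects work when full rather than queueing without limit."""

    def __init__(self, capacity: int) -> None:
        self._queue: queue.Queue = queue.Queue(maxsize=capacity)
        self.rejected = 0

    def submit(self, payment: dict) -> bool:
        """Return True if accepted, False if the caller should back off and retry."""
        try:
            self._queue.put_nowait(payment)
            return True
        except queue.Full:
            self.rejected += 1
            return False

    def drain(self, max_items: int) -> list:
        """Remove up to max_items for processing, which frees capacity for new work."""
        items = []
        for _ in range(max_items):
            try:
                items.append(self._queue.get_nowait())
            except queue.Empty:
                break
        return items


# A burst of five payments against a capacity of three: the last two are pushed back.
intake = BoundedIntake(capacity=3)
results = [intake.submit({"id": i}) for i in range(5)]
```

The design choice here is to fail fast at the edge. A rejected request costs the caller a retry, while an accepted request that waits too long in a queue can miss its authorization window entirely.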

What a modern real-time payments architecture needs

The teams making the most progress rarely start by replacing their core. More often, they add a real-time operational layer that spans existing systems and removes latency, state management, and failover pressure from the most fragile parts of the stack.

In practical terms, that layer has to:

- Unify transaction, account, and balance data for real-time decisions

- Process high volumes of ACID transactions with predictable low latency

- Support instant authorization, posting, holds, reversals, and balance updates

- Integrate cleanly with event streams, APIs, and existing core systems

- Preserve transaction integrity and recoverability during failures

- Scale horizontally as transaction volumes and payment flows expand

That is the shift that matters. Real-time payments are not just a faster channel; they depend on an architecture that can act on current data continuously and recover cleanly when something goes wrong.
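As a rough illustration of what authorization, holds, posting, and reversals look like when they have to stay consistent, here is a minimal in-memory sketch. Everything in it is an assumption made for illustration: the `WalletLedger` and `Wallet` names, amounts in integer cents, and a single process-wide lock standing in for the ACID transaction a real data layer would provide. It is not durable and says nothing about how any particular platform implements this.

```python
import threading
from dataclasses import dataclass, field


class InsufficientFunds(Exception):
    """Raised when a hold would exceed the wallet's available balance."""


@dataclass
class Wallet:
    balance_cents: int
    holds: dict = field(default_factory=dict)  # hold_id -> held amount in cents

    @property
    def available_cents(self) -> int:
        return self.balance_cents - sum(self.holds.values())


class WalletLedger:
    """Hypothetical ledger; the lock is a stand-in for a real ACID transaction."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._wallets: dict = {}
        self.entries: list = []  # append-only (kind, wallet_id, hold_id, amount)

    def open_wallet(self, wallet_id: str, balance_cents: int) -> None:
        with self._lock:
            self._wallets[wallet_id] = Wallet(balance_cents)

    def available(self, wallet_id: str) -> int:
        with self._lock:
            return self._wallets[wallet_id].available_cents

    def authorize(self, wallet_id: str, hold_id: str, amount_cents: int) -> None:
        """Check funds and place the hold in one step, so two requests cannot both spend it."""
        with self._lock:
            wallet = self._wallets[wallet_id]
            if hold_id in wallet.holds:
                return  # retry of a still-open hold is a no-op
            if amount_cents > wallet.available_cents:
                raise InsufficientFunds(hold_id)
            wallet.holds[hold_id] = amount_cents
            self.entries.append(("hold", wallet_id, hold_id, amount_cents))

    def post(self, wallet_id: str, hold_id: str) -> None:
        """Convert a hold into a posted debit; balance and hold change together."""
        with self._lock:
            wallet = self._wallets[wallet_id]
            amount = wallet.holds.pop(hold_id)
            wallet.balance_cents -= amount
            self.entries.append(("post", wallet_id, hold_id, amount))

    def reverse(self, wallet_id: str, hold_id: str) -> None:
        """Release a hold without debiting the balance."""
        with self._lock:
            wallet = self._wallets[wallet_id]
            amount = wallet.holds.pop(hold_id)
            self.entries.append(("reverse", wallet_id, hold_id, amount))


ledger = WalletLedger()
ledger.open_wallet("w1", 10_000)
ledger.authorize("w1", "h1", 7_000)  # available drops to 3,000 cents
ledger.post("w1", "h1")              # balance is now 3,000 cents, no open holds
```

The point of the sketch is the grouping: the funds check and the hold happen under one lock, as do the hold release and the balance debit, so no reader ever sees a half-applied transaction.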

What leading institutions are doing differently

Across the market, leading payments organizations are arriving at a similar conclusion: create a real-time layer around the core so the business can move faster without destabilizing the systems it already depends on.

1. Build a real-time gateway instead of rewriting the core

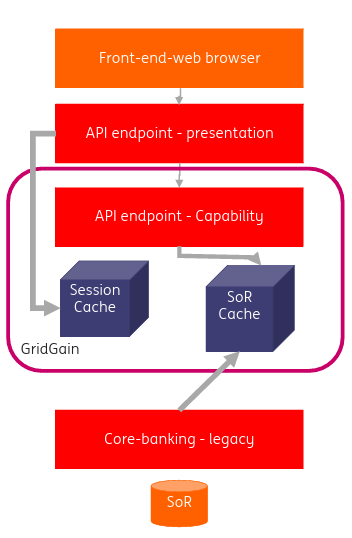

A large European bank approached instant payments by placing a real-time gateway between new payment flows and its existing mainframe engine.

The gateway handled ISO message transformation in both directions, allowing the bank to meet instant-payment timing requirements using the existing core. At the same time, a shielding layer reduced pressure on the mainframe and made payment and account data easier to expose through APIs.

It is a pragmatic pattern: keep the core where it belongs, but move latency-sensitive processing and integration logic into a layer designed for real-time work.

Figure 1: European bank’s shielding of mainframe workloads

2. Create a shared operational data hub for old and new systems

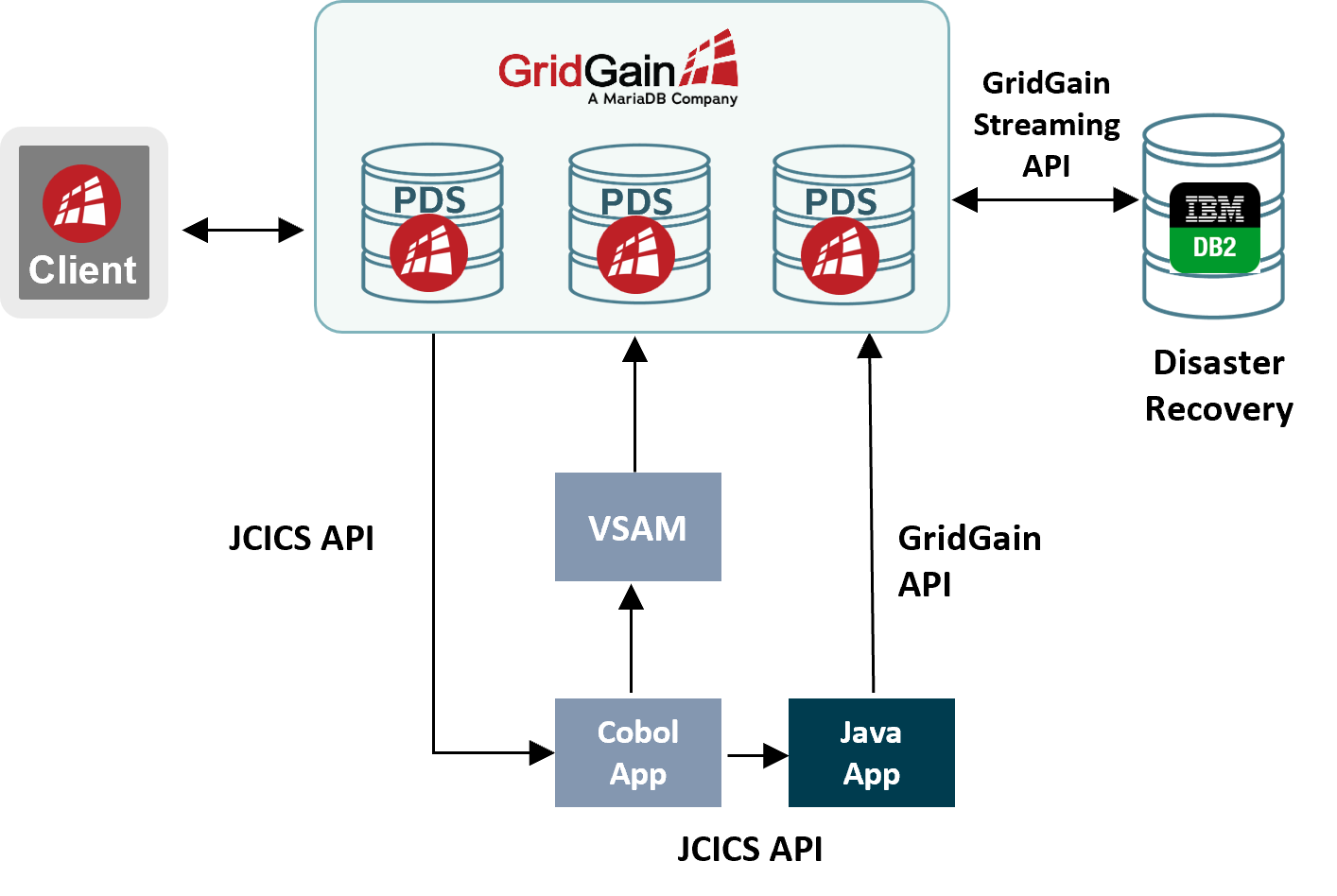

A major global card network used a similar idea in merchant payment processing, introducing a low-latency operational data hub between legacy mainframe workflows and newer Java services.

Figure 2: Global card network modernizing its payment processing

Rather than forcing every application to call back to the same slower systems of record, both legacy and modern applications made calls to a shared real-time layer, while persistence remained in place for continuity and recovery.

That did more than improve performance. It also gave the organization a workable modernization path, helping cut settlement times from days to hours.
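One possible way to picture a shared layer with persistence kept in place is a read-through, write-through hub. The sketch below is an assumption for illustration only: the source does not describe how the card network's hub works internally, and `SlowSystemOfRecord` and `OperationalHub` are hypothetical names. Reads are served from memory after the first lookup, writes are persisted before the in-memory copy is updated, and recovery reloads from the persisted state.

```python
class SlowSystemOfRecord:
    """Stand-in for a legacy store; counts reads to show how many the hub absorbs."""

    def __init__(self, rows: dict) -> None:
        self._rows = dict(rows)
        self.reads = 0

    def read(self, key: str):
        self.reads += 1
        return self._rows[key]

    def write(self, key: str, value) -> None:
        self._rows[key] = value


class OperationalHub:
    """Hypothetical shared layer that legacy and modern applications both call."""

    def __init__(self, record: SlowSystemOfRecord) -> None:
        self._record = record
        self._hot: dict = {}

    def get(self, key: str):
        # Read-through: only the first lookup reaches the system of record.
        if key not in self._hot:
            self._hot[key] = self._record.read(key)
        return self._hot[key]

    def put(self, key: str, value) -> None:
        # Write-through: persist first, so a crash never leaves memory ahead of the record.
        self._record.write(key, value)
        self._hot[key] = value

    def recover(self) -> None:
        # After a failure, drop in-memory state; later reads reload persisted values.
        self._hot.clear()


record = SlowSystemOfRecord({"acct-1": 100})
hub = OperationalHub(record)
balances = [hub.get("acct-1") for _ in range(3)]  # three reads, one trip to the record
```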

3. Move fraud decisioning into the transaction path

Real-time payments also compress the window for fraud and risk decisions.

A financial analytics provider supports transaction-speed fraud scoring by running machine learning models in 10 to 20 milliseconds at a minimum of 25,000 transactions per second, with high availability across active-active environments.

The takeaway is simple: if fraud and risk systems sit outside the transaction path, the network latency of moving data between systems becomes the source of delay at scale. In a real-time flow, they have to run on current data and return fast enough to influence the decision.
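To show what "return fast enough to influence the decision" means in code, here is a minimal sketch of a scoring call wrapped in a deadline. The `score_transaction` function is a hypothetical stand-in for a model call (a sleep simulates inference plus network time), and the budget, threshold, and the choice to route timed-out transactions to `REVIEW` are all illustrative assumptions; the real fallback is a business and risk decision.

```python
import asyncio


async def score_transaction(txn: dict, model_latency_s: float) -> float:
    """Hypothetical model call; the sleep stands in for inference plus network time."""
    await asyncio.sleep(model_latency_s)
    return 0.9 if txn["amount_cents"] > 100_000 else 0.1


async def decide(
    txn: dict,
    model_latency_s: float,
    budget_s: float = 0.1,
    threshold: float = 0.5,
) -> str:
    """Return APPROVE or DECLINE if the score arrives in time, otherwise REVIEW."""
    try:
        score = await asyncio.wait_for(
            score_transaction(txn, model_latency_s), timeout=budget_s
        )
    except asyncio.TimeoutError:
        # The score missed the window, so it cannot influence this decision.
        return "REVIEW"
    return "DECLINE" if score >= threshold else "APPROVE"


async def main() -> list:
    small = {"amount_cents": 500}
    large = {"amount_cents": 500_000}
    return [
        await decide(small, model_latency_s=0.001),  # fast model, low score
        await decide(large, model_latency_s=0.001),  # fast model, high score
        await decide(small, model_latency_s=0.5),    # model slower than the budget
    ]


outcomes = asyncio.run(main())
```

In the third call the model would have approved the payment, but its answer arrives after the budget expires, which is the failure mode described above: an accurate score that comes back too late is treated the same as no score.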

Where the architecture gets tested

At this point, the demand side is not really in doubt. The harder question is what happens when the platform is under stress.

For example:

- A fraud model has to score before the transaction timeout

- Balances and ledger entries must update immediately

- A data center degrades during peak processing

- New wallet flows, RTP volumes, or account-to-account payment traffic add load and complexity

If the answer involves delayed reconciliation, manual intervention, or a failover model that keeps systems up but leaves transaction state messy, the weak point is not the channel. It is the architecture behind it.

Why an always-on operational layer matters

For payments technology leaders, the priority is to add real-time processing and resilience around existing payment systems without turning modernization into a multi-year rewrite.

In most cases, that means building a real-time operational layer that can manage current-state data, support low-latency transaction processing, and stay consistent through failure and recovery.

That approach gives banks, payment processors, card networks, and fintechs room to launch new real-time payment and digital wallet services without destabilizing the platforms they already run.

More importantly, it is what turns an instant-looking experience into one that is actually built to perform in real time.